
Date: 2024-04-11

Project #2 for CEG5304: Generating Images through Prompting and Diffusion-based Models.

Spring (Semester 2), AY 2023-2024

In this exploratory project, you will explore how to generate (realistic) images with diffusion-based models (such as DALL-E and Stable Diffusion) through prompting, in particular hard prompting. To recap the concepts of prompting, prompt engineering, LLVM (Large Language Vision Models), and LMM (Large Multi-modal Models), please refer to the Week 5 slides (“Lect5-DL_prompt.pdf”).

Before beginning this project, please read the following instructions carefully. Failure to comply with them may be penalized:

1. This project does not involve compulsory coding. Complete your project in this Word document by filling in the “TO FILL” spaces, then save the completed file as a PDF for submission. Please do NOT modify anything (including these instructions) in your submission file.

2. Marks are based on how detailed your descriptions and discussions of the given questions are. To score well, make sure your descriptions and discussions are readable and that adequate visualizations are provided.

3. The marking of this project is NOT based on any evaluation criteria (e.g., PSNR) over the generated image. Generating a good image does NOT guarantee a high score.

4. You may use ChatGPT/Claude or any online LLM service for polishing. However, using these services purely to answer the questions is prohibited (and is actually very obvious). If you are suspected of generating your answers wholesale with these online services, your assignment may be treated as plagiarism.

5. Submit your completed PDF on Canvas before the deadline: 17:59 SGT on 20 April 2024 (updated from the slides). Please note that the deadline is strict, and late submissions will be penalized 10 points (out of 100) for every 24 hours.

6. The report must be done individually. You may discuss with your peers, but NO plagiarism is allowed. The University, College, Department, and the teaching team take plagiarism very seriously. An originality report may be generated from iThenticate when necessary. A zero mark will be given to anyone found plagiarizing, and a formal report will be handed to the Department/College for further investigation.

Task 1: Generating an image with Stable Diffusion (via Huggingface Spaces) and comparing it with the objective real image. (60%)

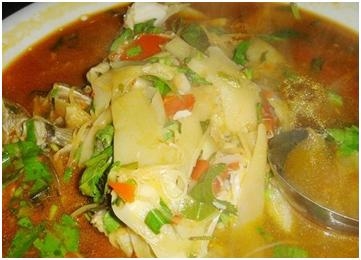

In this task, you are to generate an image with the Stable Diffusion model in Huggingface Spaces. The link is provided here: CLICK ME. You can play with the different prompts and negative prompts (prompts that instructs the model NOT to generate something). Your objective is to generate an image that looks like the following image:

1a) First, select a rather coarse text prompt. A coarse text prompt may not include many details but should be a good starting point for generating images towards our objective. An example could be “A Singaporean university campus with a courtyard.” Display your generated image and its corresponding text prompt (as well as the negative prompt, if applicable) below: (10%)

TO FILL

TO FILL

1b) Describe, in detail, how the generated image compares with the objective image. Your discussion may cover, for example, components of the objective image that are missing from the generated image, or anything generated that does not make sense in the real world. (20%)
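One possible way to organize the comparison in 1b is to list the components seen in each image and take set differences. The sketch below is only an illustration: the component labels are hypothetical placeholders (only "courtyard" comes from the example prompt), and the real lists have to come from inspecting the two images by eye.

```python
# Hypothetical component checklists; the labels are illustrative only and
# must be replaced with what is actually visible in each image.
objective_components = {"courtyard", "covered walkway", "trees"}
generated_components = {"courtyard", "trees", "fountain"}


def compare_components(objective, generated):
    """Return (missing, unexpected, shared) component lists, sorted for stable output."""
    missing = sorted(objective - generated)     # in the objective image only
    unexpected = sorted(generated - objective)  # in the generated image only
    shared = sorted(objective & generated)      # present in both images
    return missing, unexpected, shared


missing, unexpected, shared = compare_components(
    objective_components, generated_components
)
print("Missing from the generated image:", missing)
print("Generated but not in the objective image:", unexpected)
print("Present in both:", shared)
```

A checklist like this only structures the discussion; the written description still needs to explain each difference in detail.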

TO FILL

TO FILL

Next, you are to improve the generated image with prompt engineering. Note that it is highly likely that you will still be unable to obtain the objective image. A good reference for prompt engineering can be found here: PROMPT ENGINEERING.
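As a rough illustration of one common prompt-engineering pattern, a coarse prompt can be extended with comma-separated detail modifiers, while unwanted content is collected into a separate negative prompt. The helper name `build_prompt` and the example modifiers and negative terms below are assumptions made for this sketch; they are not prescribed by the assignment or by the referenced material.

```python
def build_prompt(coarse_prompt, modifiers=(), negative_terms=()):
    """Join a coarse prompt with detail modifiers; collect unwanted content separately."""
    # Drop the trailing period so the modifiers can be appended with commas.
    parts = [coarse_prompt.strip().rstrip(".")]
    parts += [m.strip() for m in modifiers if m.strip()]
    prompt = ", ".join(parts)
    negative_prompt = ", ".join(t.strip() for t in negative_terms if t.strip())
    return prompt, negative_prompt


prompt, negative_prompt = build_prompt(
    "A Singaporean university campus with a courtyard.",
    modifiers=["daytime", "photorealistic"],  # hypothetical modifiers
    negative_terms=["blurry", "cartoon"],     # hypothetical negative terms
)
print("Prompt:", prompt)
print("Negative prompt:", negative_prompt)
```

The two resulting strings can then be pasted into the prompt and negative prompt fields of the Space.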

1c) Describe in detail how you improved your generated image. The description should display the generated images and their corresponding prompts, and give detailed reasoning for each change in the prompts. If the final improved image was generated over several iterations of prompt improvement, you should show each step in detail. That is, you should display the result of each iteration of prompt change and discuss it. You should also compare your improved image with both the first image you generated above and the objective image. (30%)
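Because 1c asks for every iteration to be shown with its prompt and reasoning, it may help to keep a small log while experimenting. The following is a minimal sketch, assuming one record per iteration; the class name `PromptIteration`, its fields, and the placeholder entry are hypothetical and not part of the assignment template.

```python
from dataclasses import dataclass


@dataclass
class PromptIteration:
    prompt: str
    negative_prompt: str
    rationale: str    # why the prompt was changed in this step
    observation: str  # what changed in the generated image


def render_log(iterations):
    """Format the iterations as a plain-text log, numbered from 1."""
    lines = []
    for step, it in enumerate(iterations, start=1):
        lines.append(f"Iteration {step}")
        lines.append(f"  Prompt: {it.prompt}")
        lines.append(f"  Negative prompt: {it.negative_prompt or '(none)'}")
        lines.append(f"  Rationale: {it.rationale}")
        lines.append(f"  Observation: {it.observation}")
    return "\n".join(lines)


log = [
    PromptIteration(
        prompt="A Singaporean university campus with a courtyard",
        negative_prompt="",
        rationale="Coarse starting prompt.",
        observation="(to be recorded after generation)",
    ),
]
print(render_log(log))
```

Each log entry would still be paired with the corresponding generated image in the report.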

TO FILL

TO FILL

TO FILL

Task 2: Generating images with another diffusion-based model, DALL-E (mini-DALL-E, via Huggingface Spaces). (40%)

Stable Diffusion is not the only diffusion-based model capable of generating good-quality images. DALL-E is an alternative to Stable Diffusion. However, we will not discuss the technical differences between these two models, only the qualitative (subjective) differences between the generated images. The link for generating with mini-DALL-E is provided here: MINI-DALL-E.

2a) You should first use the same prompt as in Task 1a and generate the image with mini-DALL-E. Display the generated image and compare, in detail, the newly generated image with the one generated by Stable Diffusion. (10%)

TO FILL

TO FILL

2b) As with Stable Diffusion, you are to again improve the generated image with prompt engineering. Describe in detail how you improved your generated image. Similarly, if the final improved image was generated over several iterations of prompt improvement, you should show each step in detail. The description should display the generated images and their corresponding prompts, and give detailed reasoning for each change in the prompts. You should compare your improved image with both the first image you generated above and the objective image.

In addition, you should also describe how the improvement is similar to or different from the previous improvement process with Stable Diffusion. (10%)

TO FILL

TO FILL

2c) Based on the generation process in Task 1 and Task 2, discuss the capabilities and limitations of image generation with off-the-shelf diffusion-based models and prompt engineering. You could further elaborate on possible alternatives or improvements that could generate images that are more realistic or similar to the
